Curious, patient, & stubborn

Over the recent holiday break, I built a browser game with my kids called Alien Abducto-rama.

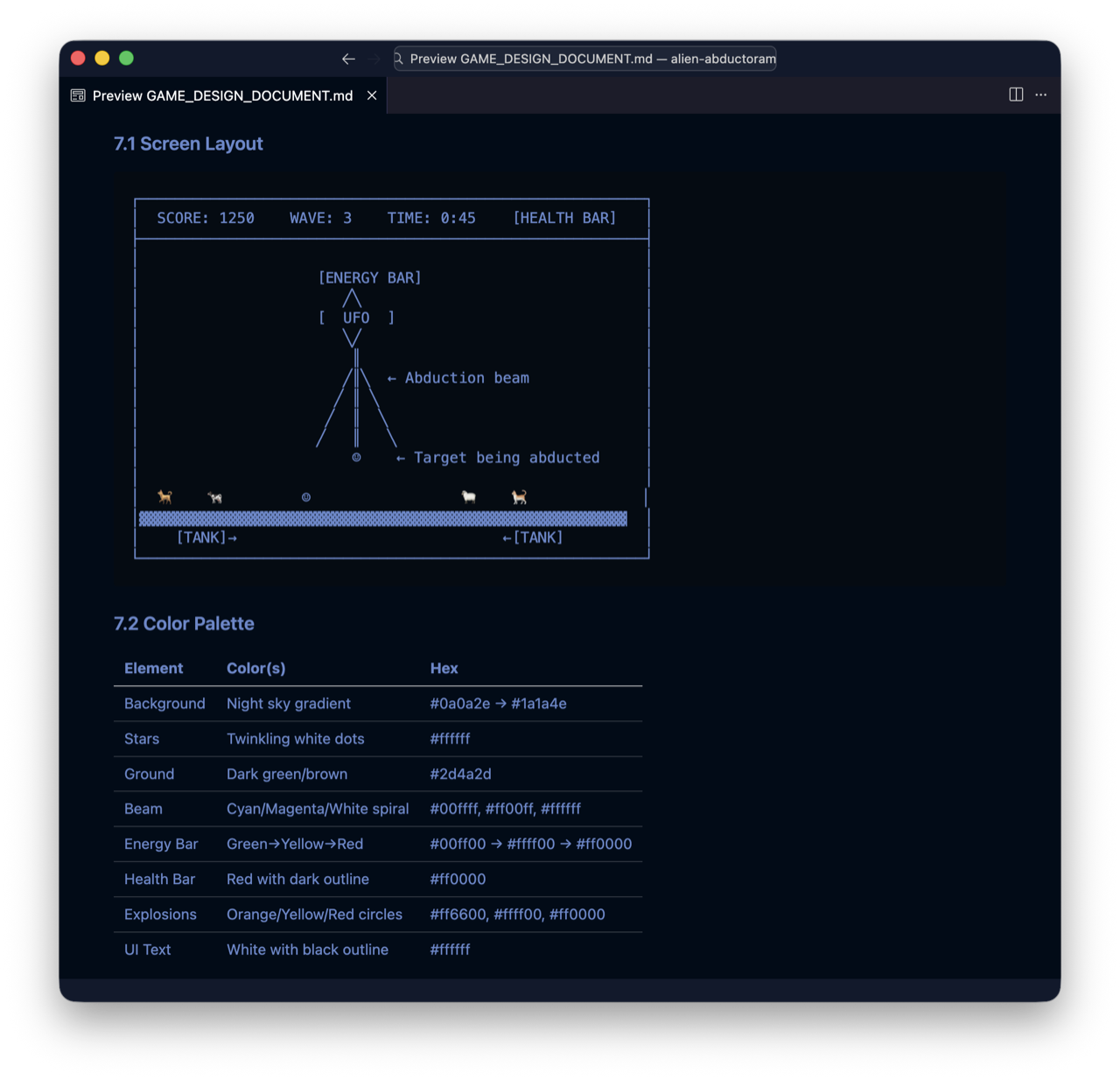

It started the simplest way possible. We sat down, brainstormed ideas, and landed on an alien abduction game—you pilot a UFO and beam up humans for points. While my kids sketched everything we’d need, I opened Claude Code and started talking through a rough game design spec. Nothing fancy, just describing what the game should feel like, how scoring might work, what the player does minute to minute.

Once we had an outline, we read it out loud together. My kids complained about things that felt boring or unfair. We tweaked mechanics, added ideas, and debated until it felt right. With the design spec done and the drawings digitized, I piped the whole thing into Claude and watched it assemble the foundation of the game.

Of course, it didn’t come out exactly how we wanted. So I showed my kids how to use voice dictation to talk to Claude directly. We described problems, asked for changes, and watched it rewrite parts of the game in real time. Explosions appeared. Sound effects landed. Power-ups got layered in.

A few weeks later I got Gastown running locally and decided to try something small but real: a classic arcade-style leaderboard with top scores and three-letter initials.

I wrote up a plan and bounced it back and forth between Codex and Claude Code until it felt solid, then handed the final version to the Gastown Mayor, plugged in my laptop, made sure it wouldn’t go to sleep, and went to bed.

When I woke up, there was a cascade of fresh commits in the repo. Gastown had autonomously figured out how to persist the data, design the UI, divide the work, wire everything together, and deploy it to the internet.

I invited people to play the game, try to beat my score, and tell me what was broken or boring or missing. People showed up and started to push on it, bend it, find the boundaries I had never imagined.

The East Coast recently got hammered with ice and snow. School was closed for over a week, and everyone was stuck at home. So we did what any reasonable family would do: we went through the feedback and started shipping features.

Someone asked for a way to stun tanks, so now if you lift one more than a third of the way up and drop it, it gets temporarily disabled with little stars spinning around its head. Someone else asked for boss levels—we gave them an arms dealer instead, in the form of a UFO Shopping Mall where you can buy power-ups, bombs, and a laser turret between waves. Another player wanted more maneuverability, so we added a warp juke that lets you dodge incoming fire. My kids spent about twenty minutes tuning the abduction sounds, beaming things up over and over to get the pitch shift just right. The leaderboard now shows the top 100 scores.
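For the curious, the stun rule is simple enough to sketch in a few lines of TypeScript. This is an illustration, not the game's actual code: the `Tank` shape, the function names, and the three-second duration are all assumptions, and only the "more than a third of the way up" threshold comes from the rule described above.

```typescript
// Hypothetical sketch of the tank-stun rule; names and duration are assumed.
interface Tank {
  stunnedUntilMs: number; // timestamp when the stun wears off (0 = never stunned)
}

const STUN_HEIGHT_FRACTION = 1 / 3; // threshold described in the post
const STUN_DURATION_MS = 3000; // assumed; the post only says "temporarily"

// liftFraction: 0 = on the ground, 1 = lifted all the way to the UFO.
// Returns true if the drop was high enough to stun the tank.
function onTankDropped(tank: Tank, liftFraction: number, nowMs: number): boolean {
  if (liftFraction > STUN_HEIGHT_FRACTION) {
    tank.stunnedUntilMs = nowMs + STUN_DURATION_MS;
    return true;
  }
  return false;
}

// A stunned tank would skip moving and firing until the timer runs out.
function isStunned(tank: Tank, nowMs: number): boolean {
  return nowMs < tank.stunnedUntilMs;
}
```

Storing an expiry timestamp instead of a countdown means the update loop only has to compare against the clock, and the spinning stars can be drawn whenever `isStunned` returns true.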

We also shipped an in-game feedback system so players can rate their experience and submit suggestions. So far we’ve collected 40 responses, averaging 4.5 out of 5 for enjoyment, 4.5 for difficulty, and 4.1 for replayability.

Then a player named CHK discovered the game and proceeded to claim over 80% of the top 100 leaderboard spots. They’ve been playing obsessively for over a week now, with a current high score of 39,248 points at Wave 12. When I was building this, I assumed Wave 7 was about as far as anyone could reasonably get. Watching someone blast past that into territory I never even play-tested has been one of the great joys of this whole project. They found the edge of the game and leaned over it!

Most of the feedback was specific and shippable, but one request kept surfacing that I couldn’t just prompt through: people wanted the characters animated. Walking, idling, reacting. Not just sliding across the screen but actually moving.

The original art came from my kids’ paper drawings. I photographed and digitized them, then cut backgrounds out in Affinity Designer, but animating that way was brutally tedious. What I wanted was a paper-doll workflow: split characters into parts, set pivots, rotate limbs, generate frames. Ruby asked the obvious question: why not just build the animation tool ourselves?

So I drafted a spec, had Claude generate HTML mockups, made them interactive, and iterated until the workflow felt right. Then came the hard part: using it, finding where it broke, fixing bugs, abandoning dead ends (including a failed skeletal-system attempt), and grinding through the complexity of twenty-plus layered parts per character. We called the tool Mr. Animation.

Eventually it clicked. The paper-doll model stabilized, exports worked, and Mr. Animation became good enough to use in production (still rough, but usable). You can try it out here.

I used it to create all the in-game animations, then added a build step that auto-detects assets, sorts frame order, and wires everything into the game.
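The frame-ordering part of a build step like that can be sketched as a small pure function. This is a rough illustration under stated assumptions, not the real build script: the `<animation>_<frame>.png` naming convention and the `groupFrames` name are hypothetical. The point it shows is why frames need a numeric sort, since a plain string sort would put frame 10 before frame 2.

```typescript
// Hypothetical sketch: group exported frames by animation and sort them numerically.
// Assumes files are named "<animation>_<frame>.png", e.g. "tank_walk_10.png".
type Frame = { index: number; file: string };

function groupFrames(fileNames: string[]): Record<string, string[]> {
  const pattern = /^(.+)_(\d+)\.png$/;
  const groups: Record<string, Frame[]> = {};

  for (const file of fileNames) {
    const match = pattern.exec(file);
    if (match === null) continue; // not an animation frame, skip it
    const name = match[1];
    const index = parseInt(match[2], 10);
    if (!groups[name]) groups[name] = [];
    groups[name].push({ index, file });
  }

  const ordered: Record<string, string[]> = {};
  for (const name of Object.keys(groups)) {
    ordered[name] = groups[name]
      .sort((a, b) => a.index - b.index) // numeric, so 2 comes before 10
      .map((frame) => frame.file);
  }
  return ordered;
}
```

A real build step would presumably read the file list from the assets folder and write a manifest the game can load; that part is left out here to keep the sketch self-contained.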

Then Claude 4.6 showed up, and the whole scene bent sideways. I followed the white rabbit down a tunnel of code, and somewhere between Wonderland and Better Judgment, I kept going and stopped worrying about finding my way back.

It started with the game’s HUD. The health bars, the energy meters, the wave counter: they were fine, they worked, but they didn’t feel like anything. I kept thinking about Neon Genesis Evangelion, specifically about how that anime portrayed its human/computer interfaces. The UI in Evangelion breathes: screens flicker and pulse and layer information in ways that feel organic, almost biological. Like the technology itself is a living organism trying to communicate with you. This was 1995, way before anyone was talking about AI the way we talk about it now, but the show was right on: what happens when you merge human consciousness with something vastly more powerful than you? What gets lost in that merge? What gets found?

I realized I could actually describe this to Claude. Not “make the HUD look cool” but the specific quality of it: the overlapping translucent layers, the way critical information pulses with urgency, the way everything feels like it’s running on something alive rather than something manufactured. And because Claude knows more about Evangelion than I do, we could go back and forth. I’d describe a moment from the show, it would confirm, ask follow-up questions, and somewhere in that exchange the real idea would crystallize. Every pass through that loop sharpened both the explanation and my understanding of what I actually wanted.

That’s when something clicked about how this whole process works. The bottleneck isn’t execution anymore. The models handle that part faster and often better than I ever could. The bottleneck moved upstream to explanation—how richly you can describe the thing living in your head, how precisely you can name the reference, the interaction pattern, the way something should feel.

And that richness comes from everywhere you’ve ever been. Every game you played obsessively, every anime you watched too many times, every piece of software that made you feel something. All of that accumulated experience becomes raw material for explanation, and explanation is what makes the machine go. Once I saw that clearly, the work stopped feeling like a cosmetic pass and started feeling like walking through a doorway into a different room.

I started describing the mechanics of games I loved—Warcraft’s tech trees, Factorio’s automation chains—and that reframed the whole project. It wasn’t just about beaming things up for points anymore. It became about harvesting Biomatter and automating that harvest.

To make that real, I built drones as small robotic spider units that roam the ground under your UFO. Harvester drones crawl the map and beam up Biomatter for you, but they can’t protect themselves. So I added attack drones that patrol the ground to clear threats while harvesters keep working. Both are disposable units with fuel limits, damage states, and finite lifespans.

Then came drone coordinators that auto-deploy and replace both drone types, so the loop doesn’t depend on mashing a button every time one goes down. All of it plugged into the tech tree: Biomatter became the currency, research unlocked new automation layers, and the game took a much more interesting turn.

Then I got really into anime-style missiles, especially the kind in Macross Plus that fan out in wide arcs, trail smoke, and erupt in semi-random bursts. That was the reference image stuck in my head.

Then I spent hours over several days describing exactly how the missile behavior should work in this world. How the smoke trails should fade. How they should tie into the HUD’s energy system, draining power as they launch. How the explosion radius should feel and what type of damage gets inflicted. Each missile group consumed energy differently, and that energy had to regenerate, and the regeneration rate tied into your tech tree choices, and all of it had to be visible in the Evangelion-inspired HUD that was still trying to feel alive.
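The energy side of that is the easiest piece to show in code. Here is a minimal sketch, assuming a single shared energy pool: the `EnergyPool` shape, the function names, and every number are made up for illustration, and the only ideas taken from the description above are that launches drain energy, energy regenerates over time, and the regeneration rate is something the tech tree can change.

```typescript
// Hypothetical sketch of a launch-drains, time-regenerates energy pool.
interface EnergyPool {
  current: number;
  max: number;
  regenPerSecond: number; // assumed to be raised by tech tree research
}

// Spend energy for a missile group if there is enough; otherwise refuse the launch.
function tryLaunch(pool: EnergyPool, cost: number): boolean {
  if (pool.current < cost) return false;
  pool.current -= cost;
  return true;
}

// Called every frame with the elapsed time; never exceeds the pool's maximum.
function regenerate(pool: EnergyPool, dtSeconds: number): void {
  pool.current = Math.min(pool.max, pool.current + pool.regenPerSecond * dtSeconds);
}
```

Because each missile group just passes its own `cost`, different groups can drain the pool differently without any special cases, and the HUD only needs to read `current / max` to draw the meter.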

I kept going until I hit the next frontier. I started thinking about what happens after you’ve mastered drones and coordinators and missiles. The answer felt obvious once I found it: you get your boss’s job. You stop managing the beaming and the drones and the coordinators, and instead you manage other UFO pilots who have to make that same transition themselves. A whole layer above. I took days to describe what that should feel like, then built it and wired it into the existing game. Right now, if you complete the full tech tree, the game transitions into Phase Two. It’s real and playable, but still unfinished. Building that layer also changed how I build: instead of stuffing more features into a single massive file, I split systems into smaller pieces so I could work on multiple parts in parallel and move faster.

I kept going and going. I’d build something, play it, feel where it was wrong, describe the wrongness as precisely as I could, watch it get rebuilt, play it again. The feedback loop between my ability to articulate and the machine’s ability to execute got so tight that the quality of my explanations became, directly and measurably, the quality of the output. Garbage in, garbage out. But also: richness in, richness out. The more specific and heartfelt and weird and personal the explanation, the better the thing that came back.

There’s this thing that happens when you watch a movie after reading a book. You sit there thinking, that’s not how I saw it. That’s not what I thought it would look like. Your imagination had filled in every gap with something personal and specific and yours, and the movie just picked different gaps to fill.

Building this game was the opposite of that feeling. For the first time, I could fill in the gaps myself. I could go to absurd lengths to describe and make comparisons, get into the minutiae of tiny, stupid details, and then watch those details show up on screen. And when they didn’t quite match what was in my head, I could explain the difference and watch it converge. It was like the distance between imagining software and holding it kept shrinking until it was barely there.

It was so satisfying I couldn’t stop. I poured weeks of free time into this world: evenings after the kids went to bed, mornings before anyone woke up. I’d describe what felt wrong, watch it change, describe it again, and keep going until the gap between imagination and reality got smaller and smaller.

That’s what shifted for me. The bottleneck moved from typing to explanation: your voice, your taste, your memory, your willingness to keep rephrasing until the thing in your head exists outside of it. And those instincts didn’t come from the models. They came from years of games, anime, software, and reps.

When I close the laptop and walk away, I feel proud. The game isn’t perfect. It’s rough, overbuilt, and full of half-successes. But it’s unmistakably mine. The door is open now. If you are patient enough to sit with the problem, curious enough to keep asking questions, and stubborn enough to push through, you’ll find that the only limit left is what you can imagine.